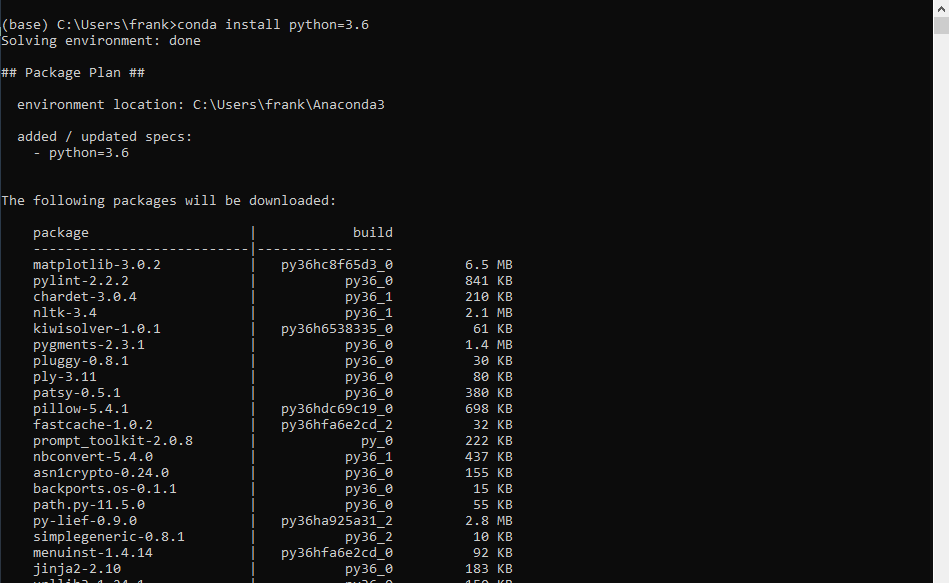

- How to install pyspark anaconda libraries

Note that PySpark requires Java 8 or later with `JAVA_HOME` properly set. If using JDK 11, an additional option must be set to `true` for Arrow-related features; refer to the Spark documentation for the exact setting.

Note for AArch64 (ARM64) users: PyArrow is required by PySpark SQL, but PyArrow support for AArch64 depends on the PyArrow release. If PySpark installation fails on AArch64 due to PyArrow, install a PyArrow release that supports AArch64 and retry.

To install PySpark from source, refer to Building Spark.

To use conda, create a new conda environment from your terminal and activate it. The conda-forge channel is the community-driven packaging effort that is the most extensive and the most current, and in most cases it also serves as the upstream for the Anaconda channels.

If you work from a Spark directory instead, run the following from inside it to set `SPARK_HOME` and to add the bundled Python zip archives to `PYTHONPATH`:

    export SPARK_HOME=`pwd`
    export PYTHONPATH=$(ZIPS=("$SPARK_HOME"/python/lib/*.zip); IFS=:; echo "${ZIPS[*]}"):$PYTHONPATH
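The `SPARK_HOME` and `PYTHONPATH` exports, together with the `JAVA_HOME` requirement, can be mirrored in Python when you want to check a setup from a script. This is a minimal sketch rather than anything shipped with Spark: the function names `spark_python_zips`, `build_pythonpath` and `java_home_is_set` are hypothetical, and it assumes the Spark directory keeps its Python archives under `python/lib/*.zip`, as the shell snippet does.

```python
import glob
import os


def spark_python_zips(spark_home):
    """Return the zip archives under <spark_home>/python/lib, sorted by name."""
    pattern = os.path.join(spark_home, "python", "lib", "*.zip")
    return sorted(glob.glob(pattern))


def build_pythonpath(spark_home, existing=""):
    """Join the zip archives and any existing PYTHONPATH with the OS separator."""
    parts = spark_python_zips(spark_home)
    if existing:
        parts.append(existing)
    return os.pathsep.join(parts)


def java_home_is_set(env=None):
    """Report whether JAVA_HOME is set and points at an existing directory."""
    env = os.environ if env is None else env
    java_home = env.get("JAVA_HOME", "")
    return bool(java_home) and os.path.isdir(java_home)


if __name__ == "__main__":
    # Assumption: fall back to the current directory, like `pwd` in the shell version.
    home = os.environ.get("SPARK_HOME", os.getcwd())
    print("PYTHONPATH would be:", build_pythonpath(home, os.environ.get("PYTHONPATH", "")))
    print("JAVA_HOME set:", java_home_is_set())
```

The script only prints what it finds; it does not change the environment of the calling shell, so the `export` lines are still needed for an interactive session.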

Conda is both cross-platform and language agnostic, and in practice it can replace both pip and virtualenv. Conda uses so-called channels to distribute packages. Together with the default channels provided by Anaconda itself, the most important channel is conda-forge.

For GeoPySpark, the `geopyspark install-jar` command downloads the backend jar file, which is too large to be included in the pip package, and installs it to the GeoPySpark installation directory.

To install GeoPySpark via pip, open the terminal and run the following:

    pip install geopyspark
    geopyspark install-jar

The first command installs the Python code and the `geopyspark` command from PyPI.

Conda is an open-source package management and environment management system developed by Anaconda. To install the pyspark package with conda, run one of the following:

    conda install -c conda-forge pyspark
    conda install -c conda-forge/label/cf201901 pyspark
    conda install -c conda-forge/label/cf202003 pyspark

You should set your `PYSPARK_DRIVER_PYTHON` environment variable so that Spark uses Anaconda. To ensure Zeppelin uses that version of Python when you use the Python interpreter, set the `zeppelin.python` setting to the path to Anaconda.

Running PySpark as a Spark standalone job: this example runs a minimal Spark script that imports PySpark, initializes a SparkContext and performs a distributed calculation on a Spark cluster in standalone mode. It is for users of a Spark cluster that has been configured in standalone mode who wish to run a PySpark job.

To install PySpark for a specific Hadoop version, set `PYSPARK_HADOOP_VERSION` when running pip:

    PYSPARK_HADOOP_VERSION=2.7 pip install pyspark -v

Supported values in `PYSPARK_HADOOP_VERSION` are:

- `without`: Spark pre-built with user-provided Apache Hadoop
- `2.7`: Spark pre-built for Apache Hadoop 2.7
- `3.2`: Spark pre-built for Apache Hadoop 3.2 and later (default)

Note that this way of installing PySpark with or without a specific Hadoop version is experimental. It can change or be removed between minor releases.
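If the `PYSPARK_HADOOP_VERSION` install is driven from a script, it can help to validate the value before calling pip. The sketch below is illustrative only: `pip_install_plan` and `SUPPORTED_HADOOP_VERSIONS` are hypothetical names, the accepted values are just the three listed in the text, and the function builds the command without running it.

```python
import os

# Values listed in the text for PYSPARK_HADOOP_VERSION.
SUPPORTED_HADOOP_VERSIONS = {
    "without": "Spark pre-built with user-provided Apache Hadoop",
    "2.7": "Spark pre-built for Apache Hadoop 2.7",
    "3.2": "Spark pre-built for Apache Hadoop 3.2 and later (default)",
}


def pip_install_plan(hadoop_version="3.2", base_env=None):
    """Return (env, argv) for a verbose pip install tied to a Hadoop build."""
    if hadoop_version not in SUPPORTED_HADOOP_VERSIONS:
        allowed = ", ".join(sorted(SUPPORTED_HADOOP_VERSIONS))
        raise ValueError(
            f"unsupported PYSPARK_HADOOP_VERSION {hadoop_version!r}; expected one of: {allowed}"
        )
    # Copy the environment so the caller's mapping is left untouched.
    env = dict(os.environ if base_env is None else base_env)
    env["PYSPARK_HADOOP_VERSION"] = hadoop_version
    argv = ["pip", "install", "pyspark", "-v"]
    return env, argv


if __name__ == "__main__":
    env, argv = pip_install_plan("2.7")
    # Show the equivalent shell command instead of executing it.
    print("PYSPARK_HADOOP_VERSION=" + env["PYSPARK_HADOOP_VERSION"], " ".join(argv))
```

To actually perform the install, the returned pair could be passed to `subprocess.run(argv, env=env, check=True)`. Because the feature is described as experimental, the list of accepted values may need updating for other Spark releases.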

Starting with Spark 2.2, it is now super easy to set up pyspark.

Download the Spark tarball from the Spark website and untar it. Then install pyspark; note that the py4j library is included automatically:

    pip install pyspark

If you want to install extra dependencies for a specific component, you can install them as below. For Spark SQL:

    pip install pyspark[sql]

For the pandas API on Spark (to plot your data, you can install plotly together with it):

    pip install pyspark[pandas_on_spark] plotly

Set the following environment variables. Point to where the Spark directory is and where your Python executable is; here I am assuming Spark and Anaconda Python are both under my home directory.

    export SPARK_HOME=~/spark-2.2.0-bin-hadoop2.7
    export PYSPARK_PYTHON=~/anaconda/bin/python

You can additionally set up ipython as your pyspark prompt as follows:

    export PYSPARK_DRIVER_PYTHON=~/anaconda/bin/ipython

That's it! There is no need to mess with `$PYTHONPATH` or do anything special with py4j like you would prior to Spark 2.2. You can run a simple line of code to test that pyspark is installed correctly.
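One way to check the installation and the environment variables described above is a short Python script. This is a sketch under stated assumptions, not the test line from the original post: the helper names `installed_pyspark_version` and `unset_variables` are hypothetical, and the check only reads package metadata, so it does not start Spark or require Java.

```python
import os
from importlib import metadata

# Environment variables that the text sets for a Spark 2.2 style setup.
VARS_FROM_TEXT = ("SPARK_HOME", "PYSPARK_PYTHON", "PYSPARK_DRIVER_PYTHON")


def installed_pyspark_version():
    """Return the installed pyspark version string, or None if it is not installed."""
    try:
        return metadata.version("pyspark")
    except metadata.PackageNotFoundError:
        return None


def unset_variables(names, env=None):
    """Return the names from `names` that are missing or empty in the environment."""
    env = os.environ if env is None else env
    return [name for name in names if not env.get(name)]


if __name__ == "__main__":
    version = installed_pyspark_version()
    print("pyspark version:", version if version else "not installed")
    print("unset variables:", unset_variables(VARS_FROM_TEXT))
```

An unset `PYSPARK_DRIVER_PYTHON` is not an error, since the text presents the ipython prompt as optional.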